The characters in my latest android story did not want to quit. It took longer than I anticipated to tie up loose ends and bring the thematic material to a conclusion. I ended at 77,500 words, about what I’d had in mind. Two to three thousand words will come out with the next wall-to-wall edit, but I often have to add a paragraph here, a scene there, so I expect to net around 77K.

Is it any good?

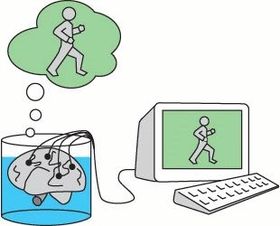

I think it says what I wanted to say, at least. I wanted to ask the question, must human consciousness be embodied – either in flesh or silicon? How would consciousness behave if it weren’t embodied? And how would both human and android consciousness react to that? I’m satisfied with that exploration.

In my recent autodidactic sci-fi reading, I’m not finding, so far, many writers exploring such questions seriously. I’ve found some good discussions of consciousness in Peter Watts’ Blindsight, and in Louisa Hall’s Speak. Watts, for example, brings up some interesting linguistic ideas that indirectly invoke Chomsky’s 1950s generative grammar, and he discusses Searle’s 1980 thought experiment of the Chinese Room. Those are worthy topics, but they’re only gestured at with hand-waving, not fully explored. Still, I’m glad to see any serious discussion of consciousness in popular fiction.

Hall’s story invokes the Turing test (the “imitation game”), but again, without unpacking its many complex implications. She also develops in some detail the attribution problem, in which humans readily attribute consciousness to any speaker who seems linguistically competent. Without naming the source, she invokes the tone and style of Weizenbaum’s ELIZA program of 1966, an algorithmic non-directive therapist that many people (college students) believed was sentient because it echoed keywords of their statements. Frustratingly, Hall exploits the attribution problem without addressing it.

Those are just two examples I happen to be aware of right now of popular fiction that seriously explores the nature of consciousness. Most sci-fi about AI is too superficial to be taken seriously. (Dick’s Do Androids Dream of Electric Sheep? is an exception.)

My story gets into the deep weeds of consciousness pretty quickly. I question, for example, the organic emergence of tribal feeling out of tacit intersubjectivity. I could have pursued topics like that further, but I reminded myself that I was already pretty weedy, so I got out as soon as my characters allowed it.

Is there a readership that will eschew ships to outer space for inner exploration of consciousness? Don’t know.